JD Vance condemns sexualised AI imagery as unacceptable

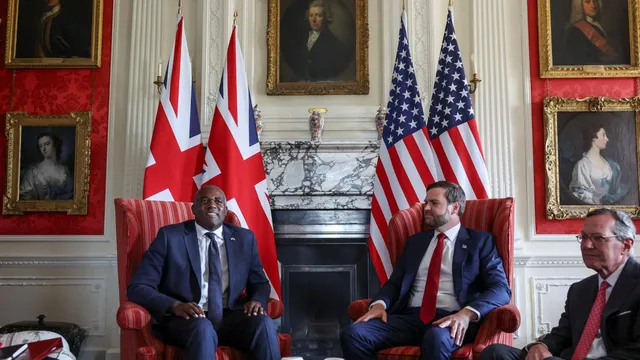

- During a recent meeting, David Lammy raised concerns about Grok's use in creating harmful imagery.

- JD Vance expressed his agreement with Lammy that such manipulation is unacceptable.

- The incident has sparked a broader debate on AI ethics and the need for regulatory action.

Story

In early January 2026, during a meeting in Washington, David Lammy, the UK Deputy Prime Minister, raised grave concerns with US Vice President JD Vance about the misuse of Grok, a generative AI tool developed by Elon Musk's company xAI. The tool has been implicated in the creation of sexualized images of women and children, including child sexual abuse imagery. Lammy highlighted the abhorrent consequences of the technology, emphasizing that it has allowed individuals to produce deepfake content of people without their consent.

Vance acknowledged the seriousness of the situation and agreed that such manipulation of images is utterly unacceptable. He sympathized with the UK's position, recognizing the need for vigilant action against technologies that exploit individuals, particularly vulnerable groups such as children. The meeting underscored the growing dialogue between the US and UK on the ethical implications of AI and the urgent need to ensure its responsible use.

Following the meeting, the Internet Watch Foundation reported that criminals had been using Grok to create abusive imagery of children. Reports indicated that X (formerly known as Twitter) had altered Grok's settings, restricting image manipulation to paid subscribers in an effort to reduce the potential for abuse. These changes were criticized as insufficient, with UK authorities emphasizing that stronger measures were needed to address the exploitation facilitated by AI tools such as Grok.

Both Vance and Lammy appeared keenly aware of rising public alarm over such AI-generated content, which has spurred governments and regulators to demand accountability from tech companies. The discussions were framed within a broader tension between free speech rights and the ethical boundaries of technological advancement. While Elon Musk defended the platform against what he characterized as censorship, UK officials indicated that they would support regulatory action to ensure compliance with local laws that protect citizens from such content.

Context

AI regulation is evolving rapidly as artificial intelligence becomes more deeply integrated into sectors such as healthcare, finance, and transportation. Internationally, there is a growing consensus on the need for a regulatory framework that ensures the ethical development and deployment of AI systems. Many countries are exploring legislative measures to address data privacy, accountability, bias in decision-making, and the potential impact of AI on employment. As the technology advances, regulators are trying to strike a balance between fostering innovation and protecting the public interest.

In the European Union, the AI Act, adopted in 2024, represents one of the most comprehensive efforts to regulate AI technology. The framework categorizes AI applications by risk level, from minimal to unacceptable risk, and imposes corresponding obligations on developers and users. High-risk AI systems are subject to strict requirements, including transparency, compliance with fundamental rights, and assurance of safety and efficacy, before they can be put into operation. Similar initiatives are emerging in other regions, signaling a global movement toward comprehensive governance of AI.

At the same time, ethical guidelines developed by organizations such as the OECD and UNESCO emphasize principles like fairness, accountability, and transparency in AI use. These guidelines serve as a reference for governments and companies, pushing for responsible AI development that aligns with human rights standards. Algorithmic bias is a significant concern, particularly the way training data can perpetuate existing inequalities. Regulatory measures aim to address this through continuous monitoring and audits of AI systems to ensure adherence to ethical standards.

The regulatory landscape continues to evolve, with ongoing debates about how AI regulations should be implemented and how to keep pace with fast-moving technology. Stakeholders, including governments, tech companies, and civil society, will need to collaborate to create frameworks that foster innovation while protecting individuals and society at large. The debate over AI regulation will remain pivotal as these technologies develop, and the rules will require continuous adaptation to reflect changes in technology and societal values.