Trump misled by fake AI video depicting Abraham Lincoln under attack

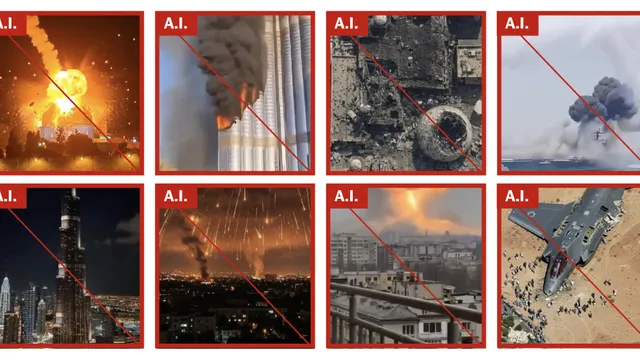

- Artificial intelligence has been used to generate over 110 unique false videos and images about the war in Iran.

- These fakes have successfully distorted perceptions of the conflict and promoted pro-Iranian views.

- The spread of such misleading content illustrates the growing influence of AI in international information warfare.

Story

During the recent escalation of the conflict involving Iran, a surge of AI-generated fake videos and images has flooded social media platforms, particularly in the early stages of the war. In the two weeks following the US and Israeli military strikes on Iran, The New York Times identified more than 110 unique AI-generated videos and images related to the conflict in the Middle East. These fakes mimic real-life events, and the chaotic, multifaceted nature of the conflict has amplified their impact.

The tactic aims to shape public perception of the war and sentiment toward it, often promoting pro-Iranian narratives and distorting the reality of the situation. Tehran capitalizes on exaggerated imagery of devastation to wear down the public's tolerance for war, falsely conveying military superiority over America's allies. One widely circulated fake video showed an apparent missile assault on Tel Aviv and racked up millions of views across various platforms, making it harder to tell authentic footage from fabrication.

Genuine content also exists and gives a more tempered picture of events. However, the vividness of the AI creations has produced an alternate perception of the war that appears to resonate more with social media audiences, allowing such deceptive material to flourish. The phenomenon is not unique to the current conflict: a similar spread of AI-generated misinformation was noted during previous conflicts, most notably between Russia and Ukraine.

The repercussions of the fakes were evident in online debates over the status of the USS Abraham Lincoln, which led to a significant influx of fabricated depictions of the ship in flames. The normalization of such content can distort understanding of international events and fuel misinformation that spreads rapidly across networks.

Context

The advent of artificial intelligence (AI) has ushered in a new era of content generation that profoundly affects public opinion, particularly in the context of warfare. AI-generated content, both written text and multimedia, can disseminate information rapidly and at scale. This proliferation can shape narratives, influence perceptions, and mobilize support for or opposition to military actions. Societies today face a deluge of information in which it can be hard to distinguish organic human expression from algorithmically produced material. Understanding the implications is therefore crucial for policymakers and the public alike, especially amid the heightened stakes of conflict, where misinformation can have dire consequences.

The role of AI-generated content in warfare is particularly pronounced in propaganda. Autonomous systems can be programmed to create persuasive narratives that serve the strategic interests of the states or non-state actors involved in a conflict. By engineering messages that resonate with specific demographics, these actors can bolster their support bases or undermine opponents. The speed at which content can be tailored to current events also allows immediate reactions to unfolding situations, making it a potent tool for controlling public sentiment. This capability can polarize views, potentially exacerbating tensions among opposing factions and complicating diplomatic efforts at conflict resolution.

The impact of AI-generated content also extends beyond the battlefield, influencing societal discourse and public behavior. Citizens increasingly turn to digital platforms for news and information, where AI-driven algorithms curate and amplify narratives that align with users' existing beliefs. This engagement can create echo chambers that reinforce biases and spread skewed or false information. The consequences are particularly troubling during warfare, where misinformation can lead to miscalculations, heightened fears, and irrational responses that may escalate conflicts, divert focus from diplomatic solutions, and increase casualty rates.

As AI continues to evolve, addressing the ethical implications of its use in generating content becomes more urgent. Given AI's growing influence on public opinion, governments, media organizations, and civil society need strategies that promote media literacy and responsible AI use. Initiatives that educate the public about the nature of AI-generated content can empower individuals to critically evaluate the information they consume. Regulatory frameworks may also be necessary to ensure transparency in how content is generated, holding creators accountable while protecting the integrity of information sources. The intersection of AI technology and warfare presents unique challenges, and tackling them head-on is essential for mitigating the risks of misinformation and supporting informed public discourse.