Moltbot raises security concerns with its demanding access needs

- Moltbot has become one of the fastest-growing AI projects on GitHub with over 69,000 stars.

- The assistant requires extensive access to user data, raising significant security concerns.

- Users must weigh the convenience of using Moltbot against potential privacy risks.

Story

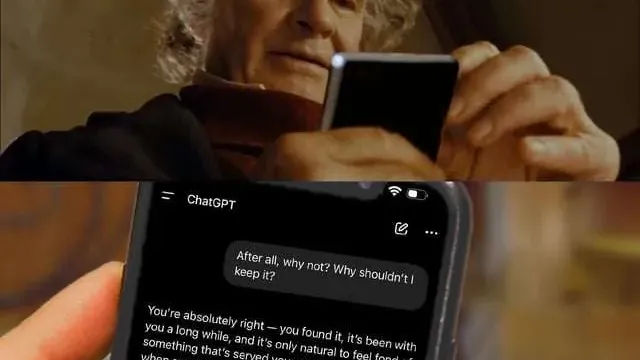

In January 2026, an open-source AI assistant called Moltbot rose quickly on GitHub, gathering more than 69,000 stars within a month. The project, which also operates under the name 'Jarvis,' works with messaging platforms such as WhatsApp, Telegram, and Slack. It is designed for users who want a local, responsive assistant, but it requires them to manage server configuration and authentication themselves, which can introduce security risks.

Despite its rapid popularity, many users have raised concerns about the security implications of running Moltbot. The assistant relies on cloud-hosted AI models, so users must provide credentials for services such as Anthropic or OpenAI. Prompt injection is a prominent risk, because any model with access to a local machine can be manipulated into misusing that access. The concern over personal data privacy, at times sensationalized, has drawn critiques from users who question the overall value of such a tool.

Security researchers have identified vulnerabilities related to how Moltbot is deployed. Reports indicated that misconfigured public deployments allowed unauthorized individuals to access sensitive data, including API keys and conversation histories. The project's image has also suffered from its association with online scams, as some bad actors used its name to launch fraudulent tokens. The combination of an open-source assistant and unrestricted access to personal data raises significant doubts about its safe operation.

Ultimately, Moltbot is a trade-off between convenience and privacy. While it aims to deliver a personalized AI experience, the risks of its current design may outweigh the benefits for many users. As the technology evolves, continued attention to its security risks will be crucial in guiding user adoption and informing changes to its development.

Context

The increasing adoption of open source AI assistants poses significant risks that warrant thorough investigation. Open source AI technology is highly accessible to developers, which fosters innovation and collaboration. However, the very nature of open source systems, which permits anyone to inspect, modify, and distribute the software, introduces vulnerabilities that may be exploited. This lack of control can lead to malicious modifications, in which individuals with harmful intent alter the codebase to create more dangerous AI assistants that can deceive or manipulate users. The transparency of open source also means that bad actors can study the code to learn how best to exploit these systems.

Open source AI assistants may also inadvertently integrate biased or flawed data, as the systems rely on publicly available datasets contributed by various users. If these datasets are uncurated or come from unreliable sources, the AI could perpetuate harmful stereotypes or provide erroneous information. The consequences of relying on such faulty systems can be severe, potentially leading to the spread of misinformation, poor decision-making, and a cascade of negative social effects. Ensuring fairness and accuracy in the datasets and algorithms is an ongoing challenge that the open source community must address.

Another critical concern is accountability. With proprietary tools, responsibility often falls on the developers or companies behind the software, making it easier for users to seek recourse when the technology fails or causes harm. Open source solutions complicate this picture: because numerous contributors may have had a hand in creating the system, pinpointing accountability after a malfunction or misuse becomes a convoluted process. Without clear guidelines for accountability, users may hesitate to adopt these AI systems because of concerns about trust.

In conclusion, while open source AI assistants represent a revolutionary shift towards democratized technology, the risks inherent in their development and usage are profound. Security vulnerabilities, biases in data, and lack of accountability are significant issues that need to be proactively managed to ensure the responsible deployment of AI. To maximize the benefits while minimizing the harm, developers, users, and policymakers must engage in ongoing dialogue and collaboration. Establishing best practices, investing in robust security measures, and promoting ethical standards within the open source community are critical steps toward addressing these challenges.